LLM-Based OCR vs Traditional OCR: What Actually Works in 2026

Key Takeaways

- LLM/VLM-based extraction outperforms traditional OCR on complex layouts, tables, and handwriting — but costs 2–5x more per page at scale ([Mindee](https://www.mindee.com/blog/llm-vs-ocr-api-cost-comparison)).

- Gemini Flash 2.0 is a price-performance outlier: **6,000 pages for $1** with near-frontier accuracy ([Vellum](https://www.vellum.ai/blog/document-data-extraction-llms-vs-ocrs)).

- Traditional OCR (Textract, Tesseract, Document AI) still wins for high-volume, structured documents where layout is consistent.

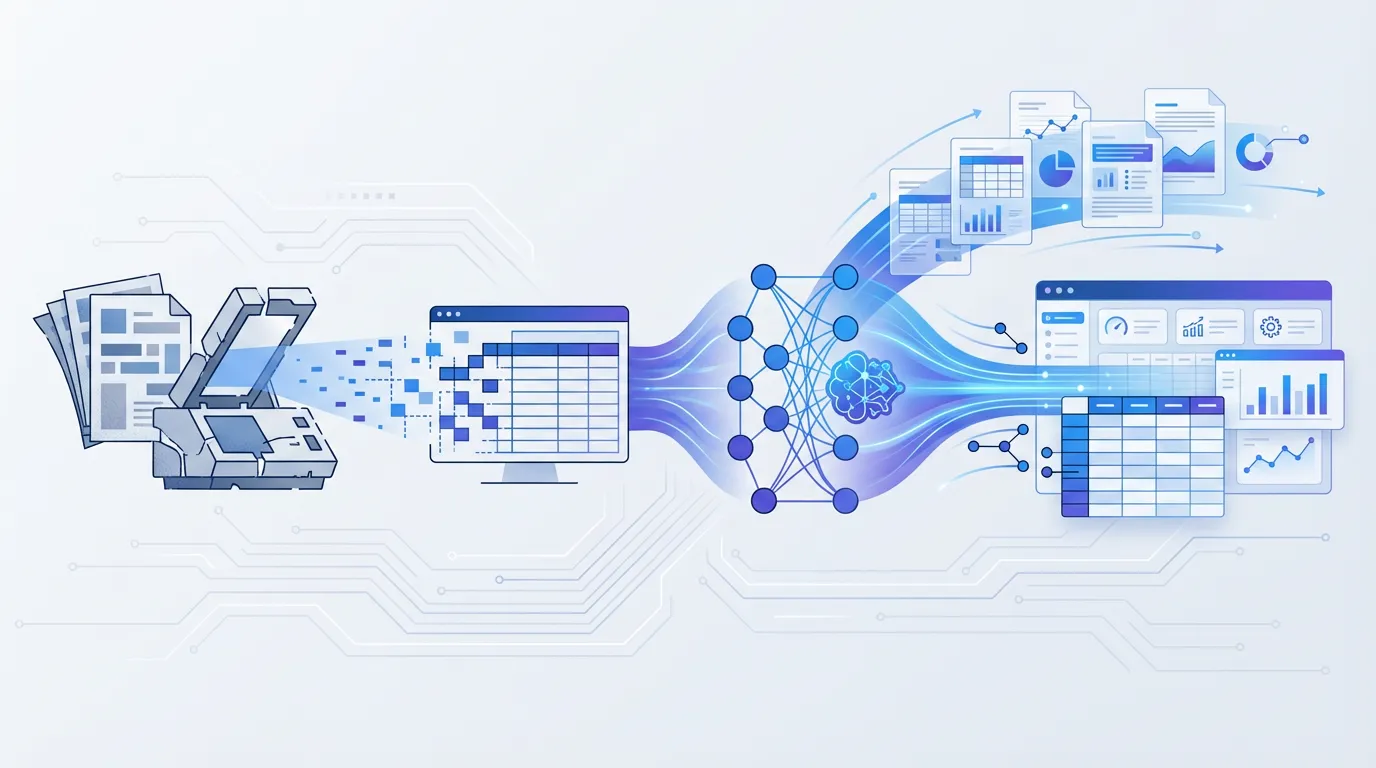

- The real production answer is often a hybrid: OCR for text extraction, LLM for field identification and reasoning.

What's Changed: Traditional OCR vs LLM-Based OCR

Traditional OCR (Tesseract, Textract, Document AI)

Traditional [OCR](/guides/ocr-data-capture) works in two stages: **detect text regions** in an image, then **recognize characters** within those regions. Tesseract uses LSTM networks. Textract and Document AI add proprietary ML layers on top for table and form detection. All three output raw text or bounding-box coordinates — they tell you *what characters are on the page*, but not *what those characters mean*.

The fundamental limitation: traditional OCR produces text. Turning that text into structured data (vendor name, invoice total, line items) requires a separate extraction layer — either regex rules, template zones, or a classifier you train yourself.

LLM/VLM-Based Extraction (GPT-4o, Gemini 2.5 Pro, Claude)

Multimodal large language models take a fundamentally different approach. You send the document image (or PDF) directly to the model. It "sees" the page the way a human would — understanding layout, context, and semantics simultaneously. You prompt it with a schema ("extract vendor_name, invoice_number, total_amount") and it returns structured JSON.

No OCR preprocessing step. No template configuration. No regex. The model handles text recognition, layout understanding, and field extraction in a single pass.

This is the shift that has developers on r/dataengineering and r/Rag reconsidering their entire document pipeline.

Accuracy Benchmarks: Head-to-Head

Let's cut through the marketing and look at actual numbers from independent benchmarks.

Printed Text on Clean Documents

On high-quality, digitally-created PDFs, the gap between approaches is narrow:

- **Tool:** GPT-4o | **Accuracy:** 98% | **Notes:** Text-based PDF invoices ([Koncile](https://www.koncile.ai/en/ressources/claude-gpt-or-gemini-which-is-the-best-llm-for-invoice-extraction))

- **Tool:** Claude 3.5 Sonnet | **Accuracy:** 97% | **Notes:** Text-based PDF invoices ([Koncile](https://www.koncile.ai/en/ressources/claude-gpt-or-gemini-which-is-the-best-llm-for-invoice-extraction))

- **Tool:** Gemini 2.5 Pro | **Accuracy:** 96% | **Notes:** Text-based PDF invoices ([Koncile](https://www.koncile.ai/en/ressources/claude-gpt-or-gemini-which-is-the-best-llm-for-invoice-extraction))

- **Tool:** AWS Textract | **Accuracy:** 95%+ | **Notes:** Clean printed text, forms ([AWS docs](https://aws.amazon.com/textract/))

- **Tool:** Tesseract 5 | **Accuracy:** >95% | **Notes:** Clean printed text only ([Koncile](https://www.koncile.ai/en/ressources/is-tesseract-still-the-best-open-source-ocr))

- **Tool:** Google Document AI | **Accuracy:** 95%+ | **Notes:** Pre-trained invoice processor ([Google Cloud](https://cloud.google.com/document-ai))

**Takeaway:** For clean, printed documents, traditional OCR is still accurate enough. The LLM advantage is marginal here.

Scanned Documents and Poor-Quality Inputs

This is where the gap widens. Scanned invoices, faxes, photos from phones, and low-DPI images break traditional OCR hard. LLMs handle degraded input far better because they use visual context — not just pixel-level character recognition — to infer what text says.

- **Tool:** Gemini 2.5 Pro | **Accuracy on Scanned Invoices:** **94%** | **Source:** [Koncile benchmark](https://www.koncile.ai/en/ressources/claude-gpt-or-gemini-which-is-the-best-llm-for-invoice-extraction)

- **Tool:** GPT-4o + OCR | **Accuracy on Scanned Invoices:** 91% | **Source:** [Koncile benchmark](https://www.koncile.ai/en/ressources/claude-gpt-or-gemini-which-is-the-best-llm-for-invoice-extraction)

- **Tool:** Claude 3.5 Sonnet | **Accuracy on Scanned Invoices:** 90% | **Source:** [Koncile benchmark](https://www.koncile.ai/en/ressources/claude-gpt-or-gemini-which-is-the-best-llm-for-invoice-extraction)

- **Tool:** AWS Textract | **Accuracy on Scanned Invoices:** 82% (line-item extraction) | **Source:** [BusinessWareTech](https://www.businesswaretech.com/blog/research-best-ai-services-for-automatic-invoice-processing)

- **Tool:** Tesseract 5 | **Accuracy on Scanned Invoices:** 80–85% (with preprocessing) | **Source:** [Extend](https://www.extend.ai/resources/pytesseract-guide-ocr-limits-alternatives)

- **Tool:** Google Document AI | **Accuracy on Scanned Invoices:** 40% (table extraction) | **Source:** [Gartner IDP benchmark](https://www.gartner.com/reviews/market/intelligent-document-processing-solutions)

**Takeaway:** On scanned documents, LLMs win by 10–15 percentage points. Google Document AI's table parser has a known weakness on complex purchase order layouts.

Table Extraction

Tables are the hardest problem in document extraction. Merged cells, multi-line rows, nested headers, and variable column widths break every traditional tool at some point.

According to the [OmniAI benchmark](https://getomni.ai/blog/ocr-benchmark), open-source VLM PaddleOCR-VL scores **92.86 on OmniDocBench** versus GPT-4o's **85.80** on document parsing that includes tables. Gemini 2.5 Pro achieved **near-perfect table extraction accuracy** in BusinessWareTech tests, though with higher latency.

**Takeaway:** For table-heavy documents ([PDF table extraction](/tools/pdf-table-extractor) from financial reports, purchase orders, BOLs), LLMs consistently outperform traditional OCR. But open-source VLMs like PaddleOCR-VL can beat closed-source LLMs while running locally.

Handwriting

Modern LLMs have made handwriting recognition usable for the first time at production quality:

- **GPT-5:** 95% on handwriting benchmarks

- **olmOCR-2-7B:** 94% (open source)

- **Gemini 2.5 Pro:** 93%

- **Tesseract:** Poor results on cursive; limited to near-printed handwriting

If you need [handwriting extraction](/tools/handwriting-to-text), LLMs are the only practical option in 2026.

Cost Per Page: The Uncomfortable Math

Accuracy is only half the equation. Here's what each approach actually costs at scale.

- **Approach:** Tesseract (self-hosted) | **Cost per 1,000 pages:** ~$0 (compute only) | **Best for:** Budget-constrained, clean documents

- **Approach:** AWS Textract (text only) | **Cost per 1,000 pages:** $1.50 | **Best for:** Simple text extraction at scale

- **Approach:** AWS Textract (tables + forms) | **Cost per 1,000 pages:** $15–$65 | **Best for:** Structured form extraction

- **Approach:** Google Document AI (Form Parser) | **Cost per 1,000 pages:** $65 | **Best for:** Form and invoice extraction

- **Approach:** GPT-4o (direct image) | **Cost per 1,000 pages:** $100–$500+ | **Best for:** Complex, variable documents

- **Approach:** Gemini 2.5 Pro | **Cost per 1,000 pages:** $10–$50 | **Best for:** High accuracy on complex docs

- **Approach:** **Gemini Flash 2.0** | **Cost per 1,000 pages:** **~$0.17** | **Best for:** Price-performance sweet spot

- **Approach:** PaddleOCR-VL (self-hosted) | **Cost per 1,000 pages:** ~$0.09 | **Best for:** Maximum accuracy per dollar

- **Approach:** Parsli (managed SaaS) | **Cost per 1,000 pages:** $80 (Starter: 250 pages) | **Best for:** No-code, production-ready pipeline

Sources: [AWS pricing](https://aws.amazon.com/textract/pricing/), [Google Cloud pricing](https://cloud.google.com/document-ai/pricing), [Mindee analysis](https://www.mindee.com/blog/llm-vs-ocr-api-cost-comparison), [Vellum](https://www.vellum.ai/blog/document-data-extraction-llms-vs-ocrs), [OmniAI](https://getomni.ai/blog/ocr-benchmark).

A few things jump out:

**Gemini Flash 2.0 broke the cost curve.** At roughly $0.17 per 1,000 pages, it's cheaper than Textract's basic text extraction while delivering multimodal understanding. [Vellum's analysis](https://www.vellum.ai/blog/document-data-extraction-llms-vs-ocrs) confirmed **6,000 pages for $1** with near-frontier accuracy. This single model has shifted the calculus for many teams.

**GPT-4o is expensive at scale.** Token-based pricing on multi-page documents adds up fast. A 10-page contract can consume 20,000+ tokens per extraction. [Mindee](https://www.mindee.com/blog/llm-vs-ocr-api-cost-comparison) found LLMs can be **5x more expensive than OCR APIs** for high-volume structured workflows.

**Self-hosted VLMs are the cheapest high-accuracy option** — if you have GPU infrastructure and ML engineering capacity. PaddleOCR-VL at $0.09/1,000 pages with 92.86% accuracy is hard to beat on raw economics. The trade-off is infrastructure management.

See Parsli in Action

Click through the interactive tour — from creating a parser to extracting structured data from a scanned receipt.

See Parsli in Action

Parsli extracts structured data from PDFs, invoices, and emails — automatically. Free forever up to 30 pages/month.

No credit card required.

When Traditional OCR Still Wins

LLMs aren't universally better. Traditional OCR has real advantages in specific scenarios:

High-volume, consistent layouts

If you're processing 100,000 utility bills a month and they all come from the same 5 providers with identical layouts, template-based extraction with Textract or [Docparser](/compare/docparser) is faster, cheaper, and more predictable than an LLM.

Latency-critical pipelines

Tesseract processes a page in 50–200ms locally. Textract returns results in 1–3 seconds. GPT-4o takes 5–15 seconds per page, and Gemini 2.5 Pro can take 10–30 seconds on complex documents. If your pipeline needs sub-second extraction, traditional OCR wins.

Deterministic output

Traditional OCR gives you the same output for the same input, every time. LLMs are probabilistic — the same document can produce slightly different JSON structures across runs. For compliance-sensitive workflows in [finance](/industries/finance) or [healthcare](/industries/healthcare), this non-determinism is a real concern.

Budget constraints with high volume

At 500,000+ pages per month, Textract's $0.0015/page for basic text detection is $750/month. Running that through GPT-4o could be $50,000+. The cost gap is massive at high scale — unless you're using Gemini Flash.

When LLMs Are the Right Choice

Variable document layouts

This is the LLM killer feature. When every vendor sends a different invoice format, every bank has a different statement layout, and you can't predict what documents will look like tomorrow — LLMs handle the variation without template maintenance. This is exactly why tools like Parsli use [Gemini 2.5 Pro for AI extraction](/solutions/no-code-document-parser): zero template configuration for any document layout.

Complex table structures

Multi-level headers, merged cells, footnotes that reference specific rows, tables that span multiple pages — LLMs parse these far better than traditional table extraction. If your workflow involves [extracting tables from PDFs](/guides/extract-tables-from-pdf) with complex structures, LLMs are the way to go.

Semantic field extraction

Traditional OCR tells you "7,290.00" appears at coordinates (450, 680). An LLM tells you that's the `total_amount` on an invoice from `Acme Supply Co.` dated `2026-03-15`. The difference between "where is the text" and "what does the text mean" is the core advantage.

Documents with mixed content

Pages that combine printed text, handwriting, stamps, logos, tables, and charts in a single document are nightmares for traditional OCR. LLMs process the entire page as a visual scene, extracting meaning from context regardless of content type.

The Hybrid Approach: What Production Teams Actually Do

Most production pipelines in 2026 don't use pure LLM or pure OCR. They combine both:

1. **OCR first** — Run Textract or Tesseract to extract raw text cheaply and fast. 2. **LLM second** — Pass the extracted text (not the image) to an LLM for field identification, validation, and structured output. Text-mode LLM calls cost a fraction of vision-mode calls. 3. **Vision fallback** — For documents where OCR fails (poor scans, handwriting), fall back to multimodal LLM with the document image directly.

This hybrid approach gives you Textract-level cost on 80% of documents and LLM-level accuracy on the 20% that need it. It's the architecture behind most managed extraction platforms, including Parsli's pipeline, which uses Gemini 2.5 Pro's multimodal capabilities to handle both clean and degraded documents in a single API call.

If you want this hybrid approach without building it yourself, Parsli's [document parsing API](/solutions/document-parsing-api) or [no-code platform](/solutions/no-code-document-parser) wraps the complexity into a simple per-page pricing model.

The Agentic Future: Beyond Both OCR and LLMs

The next shift is already happening. [Gartner predicts](https://www.gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026-up-from-less-than-5-percent-in-2025) **40% of enterprise apps will feature task-specific AI agents by end of 2026**, up from less than 5% in 2025.

In the context of document processing, this means systems that don't just extract data — they reason about it. An agentic extraction pipeline can:

- Cross-reference an invoice total against a purchase order to flag discrepancies

- Detect that a vendor's bank details changed from the last invoice (potential fraud signal)

- Route documents to different workflows based on content, not file name

- Self-correct extraction errors by validating fields against business logic

This is the direction [intelligent document processing](/guides/what-is-intelligent-document-processing) is heading. Our guide on [agentic document extraction](/guides/agentic-document-extraction) covers the architecture in more detail.

Frequently Asked Questions

Is LLM-based OCR more accurate than Textract?

For structured field extraction on scanned or complex documents, yes. [Recent benchmarks](https://www.businesswaretech.com/blog/research-best-ai-services-for-automatic-invoice-processing) show GPT-4o scoring **90.5% on line-item extraction** versus Textract's **82%** on the same invoice dataset. For simple text detection on clean documents, the accuracy gap is negligible.

How much does LLM-based document extraction cost per page?

It varies enormously by model. Gemini Flash 2.0 costs roughly **$0.17 per 1,000 pages** — cheaper than Textract. GPT-4o can cost **$0.10–$0.50+ per page** depending on document length. Self-hosted open-source VLMs like PaddleOCR-VL cost approximately **$0.09 per 1,000 pages** in compute. Parsli's managed service starts at $0.08/page on the Starter plan, handling all the infrastructure complexity for you.

Can I replace Tesseract with an LLM in production?

For clean printed text at high volume, Tesseract is still faster and cheaper. For scanned documents, variable layouts, tables, or handwriting — yes, LLMs are a significant upgrade. Many teams run a hybrid: Tesseract for initial text extraction, then an LLM for field identification.

Do LLMs hallucinate when extracting document data?

Yes, this is a real risk. LLMs can occasionally fabricate field values that don't exist in the document, especially on low-confidence extractions. Production systems mitigate this with confidence scoring, schema validation, and human-in-the-loop review for flagged documents. Parsli's extraction assigns confidence scores to every field so you can set thresholds for auto-approval vs. manual review.

Should I self-host an open-source VLM or use a managed API?

Self-hosting (PaddleOCR-VL, olmOCR, Docling) gives you the lowest per-page cost and full data control. But you're responsible for GPU infrastructure, model updates, scaling, and monitoring. [Managed APIs](/integrations/api) (Textract, Document AI, Parsli) cost more per page but eliminate operational overhead. The break-even typically favors self-hosting above ~50,000 pages/month with a dedicated ML engineer on staff.

What about Google Document AI — is it traditional OCR or LLM-based?

Document AI sits in between. Its pre-trained processors use specialized ML models (not general-purpose LLMs) tuned for specific document types. It's more capable than Tesseract but less flexible than a multimodal LLM. Its main weakness is table extraction on complex layouts — benchmarks show as low as [40% accuracy on difficult table datasets](https://www.gartner.com/reviews/market/intelligent-document-processing-solutions). For a detailed comparison, see [Parsli vs Google Document AI](/compare/google-document-ai).

Going Further

- [OCR vs AI Document Extraction](/blog/ocr-vs-ai-document-extraction) — The business-audience comparison of OCR and AI approaches

- [Best PDF Parser Tools in 2026](/blog/best-pdf-parser-tools) — Dev and no-code tools compared

- [Agentic Document Extraction](/guides/agentic-document-extraction) — The next evolution beyond OCR and LLMs

- [Document Parsing API](/solutions/document-parsing-api) — Integrate extraction into your stack

- [PDF Table Extractor](/tools/pdf-table-extractor) — Test table extraction on your documents

- [What Is Intelligent Document Processing?](/guides/what-is-intelligent-document-processing) — IDP architecture explained

- [Parsli vs AWS Textract](/compare/textract) — Direct feature and pricing comparison

- [The Real Cost of Using LLMs for OCR](/blog/real-cost-llm-ocr-document-extraction) — How we cut document extraction cost by 60x with a two-phase pipeline

Related Articles

Best PDF Parser Tools in 2026 (Dev & No-Code)

A developer and non-developer comparison of the best PDF parser tools in 2026 — covering Python libraries, cloud APIs, and no-code AI platforms with honest trade-offs for each.

ComparisonOCR vs AI Document Extraction: Why OCR Alone Is No Longer Enough in 2026

OCR converts images to text. AI extraction understands what the text means. This comparison breaks down when each technology is the right fit — with real accuracy benchmarks, cost analysis, and practical guidance for 2026.

EngineeringThe Real Cost of Using LLMs for OCR (And the Architecture That Cut It by 60x)

We tried extracting data from 24-page scanned PDFs using Gemini 2.5 Pro. It cost $3.12 per document and failed half the time. Here's what we learned, the OCR models we benchmarked, and the two-phase pipeline that actually works.

Talal Bazerbachi

Founder at Parsli