- Batch document processing means extracting data from hundreds or thousands of documents automatically, with no manual opening, copying, or data entry.

- Manual processing caps out at 20-30 documents per day per person. Beyond that, errors spike and throughput collapses.

- Three ingestion methods: email forwarding (automated inbox), API uploads (programmatic), and folder watching (file system triggers).

- Parsli handles batch processing end-to-end: ingest via email, API, or upload, extract data with AI, and export to Excel, JSON, Google Sheets, or via webhooks.

- Scale without scaling headcount: process 10 or 10,000 documents with the same pipeline. Try it free with 30 pages/month.

It's the end of the month. Your inbox has 347 invoices from 52 vendors. Your team needs to extract vendor names, invoice numbers, line items, and totals from every single one, match them against purchase orders, and enter the data into your accounting system. Last month, three people spent four days on this. Two invoices were duplicated, one was missed entirely, and a decimal error in a $12,000 invoice caused a payment dispute that took two weeks to resolve.

This is the batch processing problem. It's not that any single document is hard to process — it's that doing it 347 times, accurately, under a deadline, with inconsistent formats from dozens of different senders, is where humans break down. The cognitive load of reading, interpreting, copying, pasting, and verifying data for hours on end produces exactly the kind of errors that cause downstream problems.

This guide covers how to set up automated batch document processing — from simple email-forwarding pipelines to full API-driven workflows — so you can process hundreds or thousands of documents with the same effort it takes to process one.

- 20-30: maximum documents per day per person (manual)

- 4.5%: error rate at scale (manual entry)

- 1,000+: documents per hour (automated)

- 97-99%: AI extraction accuracy

What is batch document processing?

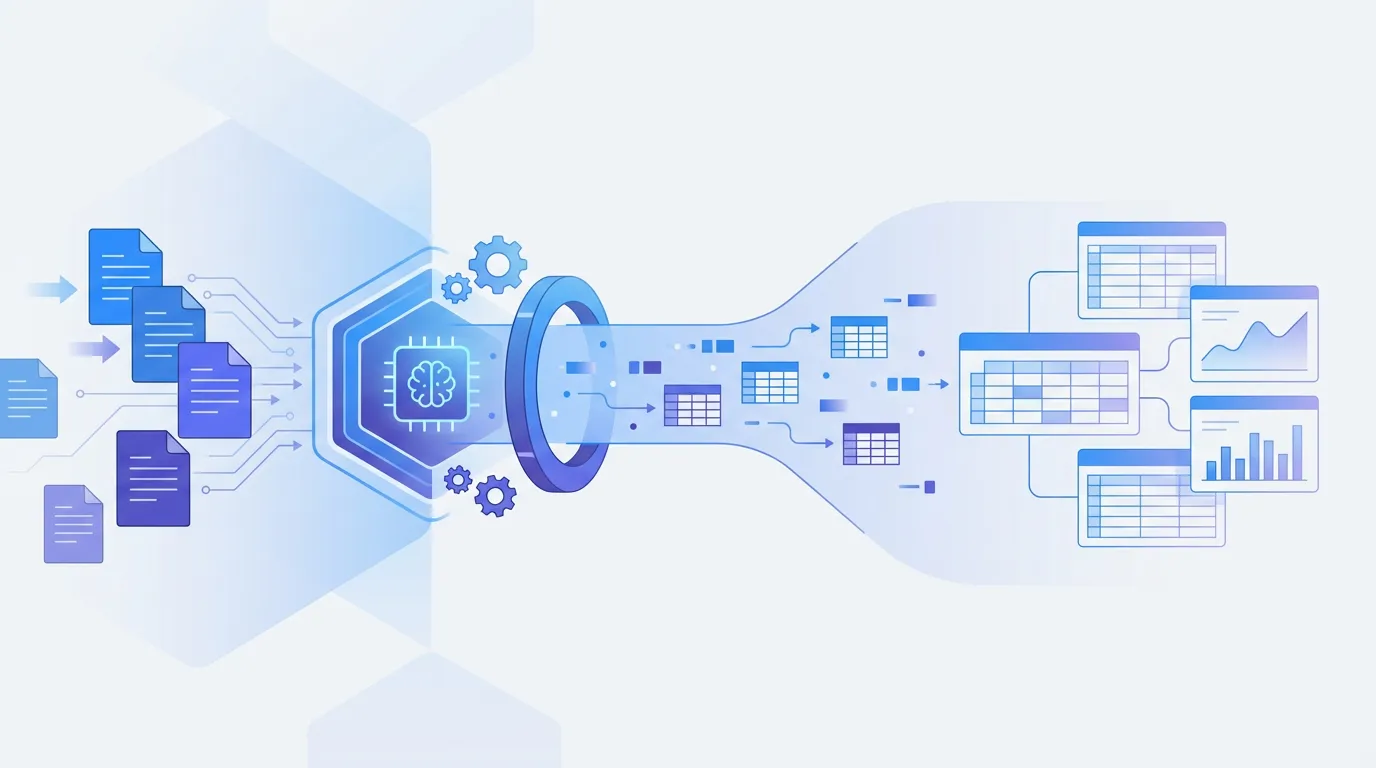

Batch document processing is the automated extraction of structured data from multiple documents in a single pipeline run. Instead of opening each document individually, reading it, and manually entering data, a batch processing system ingests a set of documents (PDFs, images, Excel files, emails), runs extraction on each one, and outputs structured data — JSON, CSV, Excel, or database rows — for all documents in the batch.

The key components are: ingestion (how documents enter the pipeline), extraction (how data is pulled from each document), validation (how accuracy is verified), and output (where the extracted data goes). A well-designed batch pipeline handles all four stages automatically, with human intervention only for flagged exceptions. The pipeline should process documents of varying formats — invoices, receipts, bank statements, purchase orders — without per-format configuration.
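The four stages can be sketched as plain Python functions to show how they fit together. This is a minimal illustration under stated assumptions, not Parsli's implementation: `extract_fields` is a hypothetical placeholder for whatever extraction engine you use, and the required-field check (`REQUIRED`) is just one possible validation rule.

```python
from dataclasses import dataclass, field
from pathlib import Path

REQUIRED = ("vendor_name", "invoice_number", "total")  # assumed required fields


@dataclass
class Result:
    source: str                                # original file, kept for traceability
    data: dict = field(default_factory=dict)
    errors: list = field(default_factory=list)


def ingest(folder: str) -> list:
    """Ingestion: collect PDFs from one folder (email or API would be other sources)."""
    return sorted(Path(folder).glob("*.pdf"))


def extract_fields(path) -> dict:
    """Extraction: hypothetical placeholder for your extraction engine."""
    raise NotImplementedError("plug in an AI service, regex rules, or OCR here")


def validate(result: Result) -> Result:
    """Validation: flag required fields that came back empty."""
    for name in REQUIRED:
        if not result.data.get(name):
            result.errors.append(f"missing {name}")
    return result


def run_batch(paths, extract=extract_fields) -> list:
    """Run extraction, then validation, on every document in the batch."""
    results = []
    for path in paths:
        try:
            results.append(validate(Result(source=str(path), data=extract(path))))
        except Exception as exc:  # a bad file is recorded, not fatal to the batch
            results.append(Result(source=str(path), errors=[f"extraction failed: {exc}"]))
    return results


def output(results):
    """Output: clean rows go downstream; flagged ones go to a human."""
    clean = [r.data for r in results if not r.errors]
    exceptions = [r for r in results if r.errors]
    return clean, exceptions
```

Swapping `extract` for a different engine changes one stage only; ingestion, validation, and output stay the same.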

Why manual document processing doesn't scale

Manual processing works fine for 5-10 documents. At 50 documents, it's tedious. At 200+, it's unsustainable. Here's what breaks down when humans process documents at scale.

- Throughput ceiling — A skilled data-entry operator can process 20-30 documents per day (depending on complexity). To handle 500 documents per month, you need a full-time person dedicated to document processing.

- Error rates climb with volume — Fatigue, repetition, and context-switching drive manual error rates from 1-2% (fresh) to 4-5% (end of day). At a 4-5% error rate, 500 documents produce 20-25 errors, each one a potential payment discrepancy, compliance issue, or customer complaint.

- Format variation multiplies complexity — If your 500 documents come from 50 different vendors/senders, each with a different layout, the mental cost of switching between formats slows processing further and increases misread errors.

- No audit trail — Manual data entry leaves no trace of how data was extracted. When a number looks wrong months later, there's no way to trace it back to the source document without pulling the original file and re-reading it.

- Opportunity cost — Every hour spent on manual data entry is an hour not spent on analysis, vendor negotiations, exception handling, or process improvement. Your team's value is in judgment, not keystrokes.
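The arithmetic behind these points fits in a few lines. The defaults (25 documents per person-day as the midpoint of 20-30, 21 working days per month, and the 4.5% error rate quoted at the top of this guide) are illustrative assumptions, so substitute your own numbers.

```python
import math


def manual_capacity(docs_per_month: int,
                    docs_per_person_day: float = 25,
                    working_days_per_month: int = 21,
                    error_rate: float = 0.045) -> dict:
    """Back-of-the-envelope staffing and error estimate for manual data entry."""
    person_days = docs_per_month / docs_per_person_day
    return {
        "person_days": person_days,
        # whole people needed if the work is spread evenly across the month
        "people": math.ceil(person_days / working_days_per_month),
        # average errors expected before any review catches them
        "expected_errors": docs_per_month * error_rate,
    }
```

For 500 documents a month this gives 20 person-days, one full-time person, and roughly 22.5 expected errors, in line with the ranges in the list.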

How to batch process documents: 3 methods compared

| Approach | Setup Complexity | Throughput | Format Flexibility | Cost | Best For |
|---|---|---|---|---|---|
| Manual processing | None | 20-30 docs/day | High (human) | High (labor) | < 50 docs/month |
| Custom scripts (Python) | High | Fast | Low (per-format code) | Free + dev time | Single-format pipelines |
| AI pipeline (Parsli) | Low | 1,000+ docs/hour | High | Free tier available | Any volume/format |

Method 1: Manual batch processing

The manual approach: open each document, read the relevant fields, type them into a spreadsheet or database form, move to the next document. Some teams add structure by creating templates — standardized spreadsheets where operators fill in specific columns. This adds consistency to the output but doesn't reduce the time per document.

- When it works: Low volume (under 50 documents/month), high-value documents requiring careful human review, or documents with formats so varied that no automated tool handles them reliably.

- When it breaks: Anything over 50 documents/month, tight SLAs, recurring deadlines (month-end close), or when error rates from fatigue become unacceptable. The math is simple: at 15 minutes per document, 200 documents is 50 hours of work.

Method 2: Custom Python processing pipeline

Build a Python script that uses libraries like pdfplumber, PyMuPDF, or openpyxl to read documents, extract data with regex and positional rules, and output structured CSV or JSON. Add Tesseract for scanned documents. Wrap it in a cron job or file-watcher to process documents automatically from a shared folder or FTP server.
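A stripped-down sketch of such a script follows. The regular expressions encode one hypothetical invoice layout (labels such as `Invoice #`, `Date:` and `Total:`), which is exactly the kind of per-format logic that has to be rewritten for each new vendor. `pdfplumber.open()` and `Page.extract_text()` are real pdfplumber calls; the text parsing lives in its own function so it can be tested without a PDF, and scanned files would need an OCR pass (for example with Tesseract) first.

```python
import re
from typing import Optional

# Patterns for ONE assumed invoice layout; another vendor's layout needs new patterns.
PATTERNS = {
    "invoice_number": re.compile(r"Invoice\s*#\s*:?\s*([A-Z0-9-]+)", re.IGNORECASE),
    "date": re.compile(r"Date\s*:?\s*(\d{4}-\d{2}-\d{2})"),  # ISO dates only
    "total": re.compile(r"Total\s*:?\s*\$?([\d,]+\.\d{2})"),  # case-sensitive: skips "Subtotal"
}


def parse_invoice_text(text: str) -> dict:
    """Apply each regex to the page text; missing fields come back as None."""
    fields: dict = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(text)
        fields[name] = match.group(1) if match else None
    if fields["total"]:
        fields["total"] = fields["total"].replace(",", "")  # "1,080.00" -> "1080.00"
    return fields


def parse_invoice_pdf(path: str) -> dict:
    """Read a text-based PDF with pdfplumber, then parse the combined page text."""
    import pdfplumber  # third-party: pip install pdfplumber

    with pdfplumber.open(path) as pdf:
        text = "\n".join(page.extract_text() or "" for page in pdf.pages)
    return parse_invoice_text(text)
```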

- Pros: Free tools, full control over the pipeline, integrates with existing infrastructure, and handles predictable document formats well.

- Cons: Requires developer time to build and maintain, needs per-format extraction logic (one script per document type/vendor), breaks when document layouts change, limited accuracy on scanned documents, and no built-in validation or confidence scoring.

If you build a custom pipeline, architect it for failure: implement dead-letter queues for documents that fail extraction, log every extraction result with source file reference for auditing, and set up alerts for extraction error rates above your threshold. These operational concerns are as important as the extraction logic itself.
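A minimal sketch of those three concerns, assuming a simple local folder layout: files that raise during extraction are moved into a dead-letter directory, every outcome is logged with its source path, and the run logs an alert when the failure rate crosses a threshold. The `extract` callable, the directory names, and the 5% threshold are placeholders; a real alert would go to a pager, email, or chat channel rather than a log line.

```python
import logging
import shutil
from pathlib import Path

log = logging.getLogger("batch")


def process_folder(inbox: Path, dead_letter: Path, extract, alert_threshold: float = 0.05):
    """Extract every file in `inbox`, quarantine failures, and alert on a high failure rate."""
    dead_letter.mkdir(parents=True, exist_ok=True)
    files = sorted(p for p in inbox.iterdir() if p.is_file())
    results, failed = {}, []
    for path in files:
        try:
            results[path.name] = extract(path)
            log.info("extracted %s", path)  # every result logged with its source file
        except Exception:
            log.exception("extraction failed for %s", path)
            # Dead-letter: move the file aside so it can be inspected and re-queued later.
            shutil.move(str(path), str(dead_letter / path.name))
            failed.append(path.name)
    failure_rate = len(failed) / len(files) if files else 0.0
    if failure_rate > alert_threshold:
        # Stand-in for a real alert channel.
        log.error("ALERT: %.1f%% of %d files failed extraction", failure_rate * 100, len(files))
    return results, failed, failure_rate
```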

Method 3: AI-powered batch processing with Parsli

Best For

Teams processing 100+ documents/month across multiple formats — invoices, receipts, forms, reports — who need structured output without per-format configuration.

Key features

- Multiple ingestion methods: email forwarding, API upload, dashboard drag-and-drop

- AI extraction across all document types and formats automatically

- Built-in OCR for scanned documents and photos

- Confidence scores flag uncertain fields for human review

- Output to JSON, CSV, Excel, Google Sheets, or via webhooks

Pros

- No per-format configuration: the same pipeline handles invoices, receipts, forms, reports

- Three ingestion methods: email forwarding, REST API, manual upload

- Webhook triggers for downstream automation

- 30 free pages/month to start

Cons

- Cloud-based processing (requires internet connection)

- Free tier limited to 30 pages/month

- Very high volume (10,000+/month) needs a Business plan

Should you use Parsli?

For batch processing at any scale, Parsli replaces per-format scripts with a single pipeline. Try it free — upload a batch and see structured data in seconds.

AI-powered batch processing uses large language models to understand document layout and content semantically — reading each document the way a human would, but at machine speed. You define your extraction schema once, and the AI applies it to every document in the batch regardless of layout, format, or vendor.

Choose your ingestion method

Parsli supports three ways to ingest documents in batch: forward emails with attachments to your parser's dedicated email address (automated inbox), POST documents to the REST API (programmatic ingestion from your application), or drag-and-drop multiple files in the dashboard (manual batch upload).
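For the API route, the client side is usually a loop that uploads files with a capped number of concurrent requests and records which ones failed. The sketch below deliberately leaves the HTTP call abstract: `upload_one` is a hypothetical callable you would write against Parsli's actual API documentation, since the endpoint, authentication, and request format are not covered here.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def upload_batch(paths, upload_one, max_workers: int = 4):
    """Upload files concurrently; return ({path: response}, {path: error message}).

    upload_one(path) is a hypothetical callable that performs one POST request.
    max_workers caps concurrent requests so a large batch does not flood the API.
    """
    done, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(upload_one, p): p for p in paths}
        for future in as_completed(futures):
            path = futures[future]
            try:
                done[path] = future.result()
            except Exception as exc:  # keep going; collect failures for a retry pass
                errors[path] = str(exc)
    return done, errors
```

Returning failures separately makes a second, retry-only pass over `errors` straightforward.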

Define your extraction schema

In Parsli's no-code schema builder, define the fields you need: vendor_name, invoice_number, date, total, line_items (as a repeating array). The same schema works across all document formats — the AI maps each document's layout to your schema automatically.
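The schema builder itself is no-code, but mirroring the same schema on your side lets downstream code check what it receives. The sketch below is not Parsli's format: it assumes each extracted document arrives as a dict with the five fields named above, and the line-item keys (`description`, `quantity`, `amount`) are hypothetical. The final consistency check also assumes the total has no separate tax or shipping lines.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation


@dataclass
class LineItem:
    description: str
    quantity: Decimal
    amount: Decimal


@dataclass
class Invoice:
    vendor_name: str
    invoice_number: str
    date: str
    total: Decimal
    line_items: list  # repeating array of LineItem


def to_decimal(value) -> Decimal:
    """Parse money/quantity values exactly; strips thousands separators."""
    try:
        return Decimal(str(value).replace(",", ""))
    except InvalidOperation:
        raise ValueError(f"not a number: {value!r}") from None


def parse_invoice(data: dict) -> Invoice:
    """Map one extracted record onto the schema; raises if a field is missing or malformed."""
    items = [
        LineItem(i["description"], to_decimal(i["quantity"]), to_decimal(i["amount"]))
        for i in data.get("line_items", [])
    ]
    return Invoice(
        vendor_name=data["vendor_name"],
        invoice_number=data["invoice_number"],
        date=data["date"],
        total=to_decimal(data["total"]),
        line_items=items,
    )


def line_items_match_total(inv: Invoice) -> bool:
    """Cross-field check: line-item amounts should add up to the invoice total."""
    return sum((i.amount for i in inv.line_items), Decimal("0")) == inv.total
```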

Review, validate, and export

Parsli processes every document in the batch and returns structured data with confidence scores. Review flagged fields (below your confidence threshold), then export the entire batch to CSV, Excel, JSON, or push to Google Sheets. Webhook integrations with Zapier and Make can trigger downstream workflows automatically.
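Carrying the confidence scores into the exported file lets reviewers see which cells to check. This sketch assumes a hypothetical result shape (shown in the docstring) that may not match Parsli's actual export format, and it writes header-level fields only; line items would usually go to a separate sheet or file.

```python
import csv
import io

FIELDS = ["vendor_name", "invoice_number", "date", "total"]  # header-level fields only


def batch_to_csv(results, threshold: float = 0.90) -> str:
    """One CSV row per document, plus a column naming fields below the threshold.

    Each result is assumed to look like
    {"file": "a.pdf", "fields": {"total": {"value": "10.00", "confidence": 0.97}, ...}}.
    """
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["file", *FIELDS, "needs_review"])
    writer.writeheader()
    for r in results:
        row = {"file": r["file"]}
        flagged = []
        for name in FIELDS:
            f = r["fields"].get(name, {})
            row[name] = f.get("value", "")
            if f.get("confidence", 0.0) < threshold:  # missing fields are flagged too
                flagged.append(name)
        row["needs_review"] = ";".join(flagged)
        writer.writerow(row)
    return buf.getvalue()
```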

Free Invoice Parser

Start batch processing invoices right now — upload multiple files and get structured data from each one. No sign-up required.

Processing 100+ documents per month? Parsli's batch pipeline handles any format at any scale, with 30 free pages/month and no credit card required.

Use cases for batch document processing

1. Month-end accounts payable processing

AP teams face a monthly crunch: hundreds of vendor invoices arrive in the last week of the month, and all need to be processed, matched to POs, approved, and entered into the accounting system before the books close. With batch processing, invoices are forwarded to Parsli's email address as they arrive throughout the month. By month-end, all invoice data is already extracted and structured — the AP team just reviews exceptions and approves payments. The processing bottleneck disappears entirely.

2. Expense report processing

When employees submit expense reports with attached receipts — hotel bills, meal receipts, taxi fares, conference fees — someone has to extract the amounts, dates, and categories from each receipt. At a company with 200 employees filing monthly expenses, that's thousands of receipts per month. Batch processing extracts receipt data automatically: employees submit photos or PDFs, the pipeline extracts amounts, dates, vendors, and categories, and the structured data feeds into the expense management system for approval.

3. Document digitization and migration

Organizations moving from paper-based to digital workflows often face a backlog of thousands of scanned documents — patient records, government applications, insurance claims, property documents — that need to be digitized and entered into a database. Batch processing turns this from a multi-month manual project into a pipeline: scan the documents, upload in bulk, extract structured data with AI, and import into the target system. Projects that would take a team months can be completed in days.

Best practices for batch document processing

1. Separate ingestion from extraction

Design your pipeline so documents are collected (ingested) into a queue independently of extraction. This means documents arriving via email, API, or file upload all land in the same processing queue. If extraction fails on one document, it doesn't block the rest of the batch. This architectural separation makes your pipeline resilient — a malformed PDF doesn't crash the entire batch run.
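A single-process illustration of that separation, using Python's standard `queue` and `threading` modules: every ingestion source only puts `(source, filename)` pairs on a shared queue, and workers drain it independently, recording a failure for one document and moving on. In production the queue would more likely be a durable message broker; the in-memory version here is an assumption to keep the example self-contained.

```python
import queue
import threading


def worker(jobs: queue.Queue, extract, results: list, failures: list) -> None:
    """Drain the shared queue; one malformed document never stops the worker."""
    while True:
        item = jobs.get()
        try:
            if item is None:  # sentinel: ingestion is finished
                return
            source, filename = item
            try:
                results.append((source, filename, extract(filename)))
            except Exception as exc:
                failures.append((source, filename, str(exc)))
        finally:
            jobs.task_done()


def run(ingested, extract, n_workers: int = 2):
    """ingested: iterable of (source, filename) pairs from email, API, or upload."""
    jobs: queue.Queue = queue.Queue()
    results, failures = [], []
    threads = [threading.Thread(target=worker, args=(jobs, extract, results, failures))
               for _ in range(n_workers)]
    for t in threads:
        t.start()
    for item in ingested:  # producers only enqueue; they never wait on extraction
        jobs.put(item)
    for _ in threads:
        jobs.put(None)  # one sentinel per worker
    for t in threads:
        t.join()
    return results, failures
```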

2. Implement confidence-based routing

Not every document needs human review — only the ones where extraction confidence is low. Set a confidence threshold (e.g., 90%) and automatically route high-confidence extractions to your output system while flagging low-confidence ones for human review. This hybrid approach gives you automation speed on the 80-90% of documents that extract cleanly, while maintaining accuracy on the difficult cases. Parsli's per-field confidence scores make this routing straightforward.
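Once results carry per-field scores, the routing itself is short. The sketch assumes scores on a 0-1 scale and that each document is a dict whose `fields` map names to `{"value": ..., "confidence": ...}`; this shape is an illustration, not Parsli's documented output. The optional per-field overrides are a design choice for cases where, say, totals deserve a stricter bar than dates.

```python
from typing import Optional


def route_batch(docs, default: float = 0.90, per_field: Optional[dict] = None):
    """Split docs into auto-accepted and needs-review lists."""
    per_field = per_field or {}
    accepted, review = [], []
    for doc in docs:
        low = [name for name, f in doc["fields"].items()
               if f["confidence"] < per_field.get(name, default)]
        if low:
            # keep the document intact and note which fields the reviewer should check
            review.append({**doc, "low_confidence_fields": low})
        else:
            accepted.append(doc)
    return accepted, review
```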

3. Build audit trails from day one

For every extracted document, store: the original file, the extraction timestamp, the extracted data, confidence scores, and any human corrections. This audit trail is essential for compliance (SOX, HIPAA, GDPR), error investigation, and continuous improvement. When a downstream discrepancy surfaces months later, you need to trace the data back to the source document and the extraction result — without an audit trail, you're guessing.
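One way to capture those items is an append-only JSON Lines file, sketched below; a database table works just as well. Storing a SHA-256 hash of the original file next to the data proves which exact file a row came from, while the file itself stays in your document storage. The record's field names are illustrative assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def audit_record(file_path: Path, extracted: dict, confidences: dict, corrections=None) -> dict:
    """Build one audit entry; the SHA-256 ties the row to the exact source file."""
    return {
        "source_file": str(file_path),
        "sha256": hashlib.sha256(file_path.read_bytes()).hexdigest(),
        "extracted_at": datetime.now(timezone.utc).isoformat(),
        "data": extracted,
        "confidence": confidences,
        # e.g. [{"field": "total", "old": "1O.00", "new": "10.00", "by": "reviewer"}]
        "corrections": corrections or [],
    }


def append_audit(log_path: Path, record: dict) -> None:
    """Append-only log: one JSON line per document, never rewritten in place."""
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, default=str) + "\n")
```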

Common mistakes in batch document processing

1. Processing all documents with the same schema

If your batch contains invoices, receipts, and purchase orders, they need different extraction schemas. Invoices have line items and payment terms; receipts have merchant names and totals; POs have ship-to addresses and order quantities. Trying to extract all of them with one schema produces empty fields and missed data. Classify documents by type first (manually or with AI classification), then route each type to its appropriate extraction schema.
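A minimal classify-then-route step might look like this. Keyword rules stand in for the AI classification mentioned above, and both the keywords and the per-type field lists are hypothetical. Rule order matters: a purchase order can easily mention invoicing, so the more specific type is checked first, and anything unmatched goes to manual triage.

```python
# Hypothetical keyword rules standing in for real (e.g. AI-based) classification.
RULES = [
    ("purchase_order", ("purchase order", "p.o. number", "ship to")),
    ("invoice", ("invoice", "amount due", "payment terms")),
    ("receipt", ("receipt", "thank you for your purchase", "change due")),
]

# Hypothetical per-type schemas (field names only).
SCHEMAS = {
    "invoice": ["vendor_name", "invoice_number", "date", "total", "line_items", "payment_terms"],
    "receipt": ["merchant_name", "date", "total"],
    "purchase_order": ["po_number", "ship_to_address", "line_items"],
}


def classify(text: str) -> str:
    """Return the first document type whose keywords appear in the text."""
    lowered = text.lower()
    for doc_type, keywords in RULES:
        if any(k in lowered for k in keywords):
            return doc_type
    return "unknown"


def route_to_schema(text: str):
    """Pair the detected type with its schema; None means manual triage."""
    doc_type = classify(text)
    return doc_type, SCHEMAS.get(doc_type)
```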

2. No error handling for failed documents

In any batch of 500 documents, a few will fail — corrupted files, blank pages, unsupported formats, or documents so poorly scanned that even AI can't read them. If your pipeline crashes on the first failure, you lose the entire batch run. Implement error handling that logs failed documents, continues processing the rest, and produces a report of failures for manual review. A 99% success rate on 500 documents is still 5 documents that need attention — make sure your pipeline surfaces them instead of hiding them.

3. Skipping deduplication

In email-based ingestion pipelines, the same document often arrives multiple times — forwarded by different people, included as a reply attachment, or re-sent after a correction. Without deduplication, you extract and process the same invoice twice, creating duplicate entries in your accounting system. Implement deduplication based on file hash (for exact duplicates) and extracted key fields like invoice number + vendor (for logical duplicates that may differ in scan quality or file format).
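Both checks can run in one pass, as sketched below. The sketch assumes you have the raw file bytes and the extracted fields for each document; the normalization (lower-casing and collapsing whitespace in the vendor name, upper-casing the invoice number) is an illustrative choice, and documents missing either key field are never merged by the logical check.

```python
import hashlib


def logical_key(fields: dict) -> tuple:
    """Normalize vendor + invoice number so 'ACME Corp ' and 'acme corp' collide."""
    vendor = " ".join(fields.get("vendor_name", "").lower().split())
    number = fields.get("invoice_number", "").strip().upper()
    return vendor, number


def deduplicate(docs):
    """docs: iterable of (content_bytes, extracted_fields). Returns (unique, duplicates)."""
    seen_hashes, seen_keys = set(), set()
    unique, duplicates = [], []
    for content, fields in docs:
        digest = hashlib.sha256(content).hexdigest()
        key = logical_key(fields)
        if digest in seen_hashes:
            duplicates.append((fields, "exact file duplicate"))
        elif all(key) and key in seen_keys:
            duplicates.append((fields, "same vendor + invoice number"))
        else:
            unique.append(fields)
            seen_hashes.add(digest)
            if all(key):  # only index complete keys
                seen_keys.add(key)
    return unique, duplicates
```

In practice the hash check can run before extraction, so exact duplicates never cost an extraction at all.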

From manual bottleneck to automated pipeline

Batch document processing is the difference between a team drowning in monthly data entry and a pipeline that runs while people focus on higher-value work. The right approach depends on your volume and format variety — but for anything beyond 50 documents per month, automation pays for itself in time, accuracy, and sanity.

Start with email forwarding for the simplest setup: forward documents to Parsli as they arrive, and structured data is waiting for you when you need it. For programmatic control, use the document parsing API to build batch processing directly into your application. Either way, the path from 347 invoices to structured data shouldn't take four days — it should take minutes. Try Parsli free to see batch processing in action.

Stop copying data out of documents manually.

Parsli extracts structured data from PDFs, invoices, and emails — automatically. Free forever up to 30 pages/month.

No credit card required.

Frequently Asked Questions

What is batch document processing?

Batch document processing is the automated extraction of structured data from multiple documents in a single pipeline run. Instead of processing each document manually, a batch system ingests documents (via email, API, or file upload), extracts data from each one using AI, validates the output, and delivers structured results to your target system.

How many documents can Parsli process in a batch?

There's no hard limit on batch size. You can upload hundreds of documents at once via the dashboard, send them continuously via email forwarding, or POST them to the API as fast as your application generates them. Your monthly page allowance depends on your plan: the free tier includes 30 pages/month, while Business plans support 10,000+ pages/month.

Can I batch process different document types together?

Yes, but you'll get better results by routing different document types to different extraction schemas. Invoices, receipts, and purchase orders have different fields — using a dedicated schema for each type ensures you capture all relevant data. Parsli supports multiple parsers, so you can set up separate extraction schemas for each document type.

How does email-based document ingestion work?

Each Parsli parser has a dedicated email address. Forward emails with document attachments to that address, and Parsli automatically extracts data from the attachments. This is the simplest batch processing setup — no code, no API integration. Just set up email forwarding rules in your inbox, and incoming document attachments are processed automatically.

What happens if a document fails during batch processing?

Parsli processes each document independently — if one fails (corrupted file, blank page, unsupported format), the rest of the batch continues processing normally. Failed documents are flagged in your dashboard with error details so you can address them separately without re-running the entire batch.

Can I trigger downstream workflows after batch processing?

Yes. Parsli supports webhooks that fire after each document is processed, sending the extracted JSON to any URL you specify. This integrates with Zapier, Make, custom APIs, and any webhook-compatible system. You can trigger accounting entries, CRM updates, Slack notifications, or Google Sheets appends automatically after each extraction.
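On the receiving side, a webhook target can be as small as the standard-library server below. The payload shape is not specified here, so the handler only checks that the body is a JSON object and hands it to a stand-in list (`RECEIVED`) where real downstream logic would go; port 8000 and the `serve()` helper are arbitrary choices for illustration. If your provider supports request signing or a shared secret, verify it before trusting the payload.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

RECEIVED = []  # stand-in for a real downstream step (accounting entry, sheet row, etc.)


def handle_payload(body: bytes) -> int:
    """Validate one delivery and pass it on; return the HTTP status to send back."""
    try:
        payload = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400
    if not isinstance(payload, dict):
        return 400
    RECEIVED.append(payload)
    return 200


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        status = handle_payload(self.rfile.read(length))
        self.send_response(status)
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Block and handle webhook POSTs until interrupted."""
    HTTPServer(("", port), WebhookHandler).serve_forever()
```

Keeping the parsing in `handle_payload` means the logic can be tested without starting a server; call `serve()` to start listening.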

How do I handle documents that need human review?

Use confidence-based routing. Parsli assigns confidence scores to every extracted field. Set a threshold (e.g., 90%) and automatically accept high-confidence extractions while routing low-confidence documents to a review queue. This hybrid approach gives you automation speed on most documents while maintaining human oversight on uncertain extractions.


Talal Bazerbachi

Founder at Parsli